Performance engineering

Company-wide parser performance rewrite

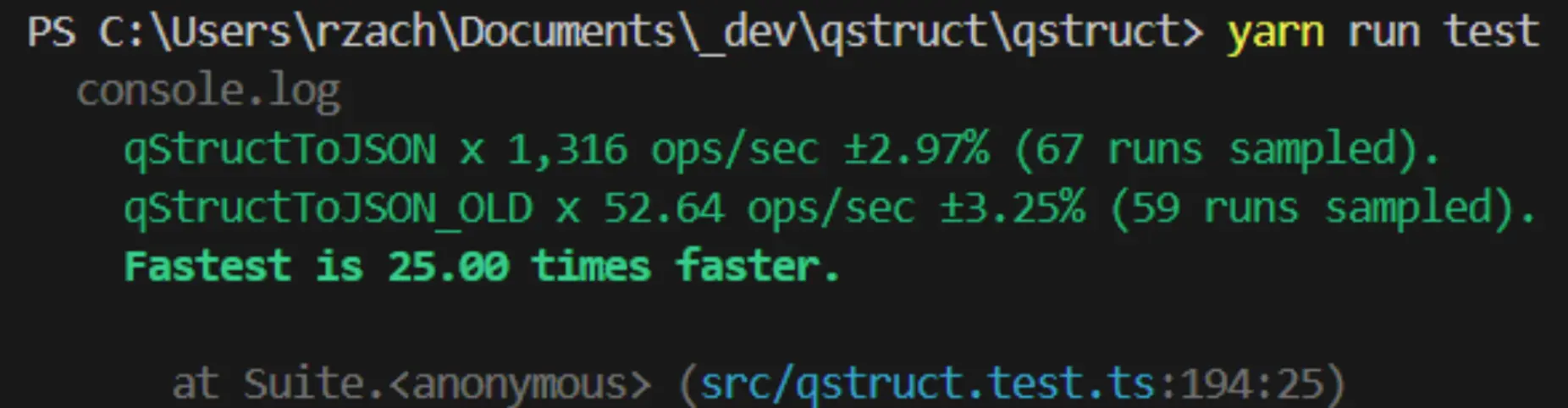

A shared parser added seconds to large medical-service queries. I benchmarked the bottleneck, replaced expensive merge behavior with map-based lookup, and moved the parser toward work that scales linearly with input size.

Key metrics

- Representative workload: 25 s to 1 s

- Measured parser speedup: 25x faster

- User-facing load time: about 80% lower

Problem

Large medical-service responses were slowed down by a QStruct-to-JSON conversion path. The work looked like a parser problem, but the production symptom was broader: users waited several extra seconds for responses that should have been routine.

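The slow path repeatedly merged arrays and created new objects while processing growing data sets. Each merge had to handle data that earlier steps had already processed, so cost grew faster than input size and larger cases became disproportionately expensive.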
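As a rough illustration of why repeated merging gets expensive, the sketch below counts element copies for two ways of collecting the same items. It is not the production parser code: `collectByRebuilding` and `collectByAppending` are hypothetical names, and the spread-based rebuild is only a stand-in for the merge behavior described above.

```javascript
// Hypothetical stand-in for the slow path: every step builds a new array,
// so everything collected so far is copied again.
function collectByRebuilding(items) {
  let collected = [];
  let copies = 0; // number of elements written into new arrays
  for (const item of items) {
    collected = [...collected, item]; // allocates a fresh array each time
    copies += collected.length;
  }
  return { collected, copies };
}

// Appending in place writes each element exactly once.
function collectByAppending(items) {
  const collected = [];
  let copies = 0;
  for (const item of items) {
    collected.push(item);
    copies += 1;
  }
  return { collected, copies };
}

const input = Array.from({ length: 1000 }, (_, i) => i);
const rebuilt = collectByRebuilding(input);
const appended = collectByAppending(input);

console.log(rebuilt.copies);  // 500500 (1 + 2 + ... + 1000)
console.log(appended.copies); // 1000
```

Both functions return the same array, but the rebuilding version does work proportional to the square of the input size, which is the kind of growth that makes large cases disproportionately slow.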

Approach

- Built repeatable benchmarks around a representative 4 KB QStruct content field from a standard service-recording entry.

- Replaced repeated lodash array merge work with map-based constant-time lookup and direct append behavior for existing keys.

- Swapped slower regular-expression execution paths for faster native JavaScript regular-expression handling where it fit the parser contract.

- Kept the rewrite focused on the shared library so every consuming workflow could benefit without duplicating optimizations.
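The map-based change and the benchmark step can be sketched as follows. This is a minimal illustration under stated assumptions, not the shared library's actual code: the `{ key, value }` entry shape, the function names, and the synthetic workload are all hypothetical, and the scan-and-rebuild version uses plain JavaScript in place of lodash.

```javascript
// Assumed entry shape for illustration only: { key: string, value: string }.

// Slow pattern: scan for an existing group, then rebuild the groups array
// and the group's values array on every entry.
function groupByScanAndMerge(entries) {
  let groups = [];
  for (const { key, value } of entries) {
    const existing = groups.find((g) => g.key === key); // linear scan
    if (existing) {
      groups = groups.map((g) =>
        g === existing ? { key, values: [...g.values, value] } : g
      );
    } else {
      groups = [...groups, { key, values: [value] }];
    }
  }
  return groups;
}

// Map-based pattern: constant-time lookup per key, direct append for
// keys that already exist. Map preserves insertion order.
function groupWithMap(entries) {
  const groups = new Map();
  for (const { key, value } of entries) {
    const values = groups.get(key);
    if (values) {
      values.push(value);
    } else {
      groups.set(key, [value]);
    }
  }
  return Array.from(groups, ([key, values]) => ({ key, values }));
}

// Tiny repeatable timing helper (hypothetical, not the original benchmark).
function timeMs(fn, arg) {
  const start = performance.now();
  fn(arg);
  return performance.now() - start;
}

// Synthetic workload: 5000 entries spread over 500 distinct keys.
const entries = Array.from({ length: 5000 }, (_, i) => ({
  key: `k${i % 500}`,
  value: `v${i}`,
}));

console.log("scan and merge ms:", timeMs(groupByScanAndMerge, entries));
console.log("map lookup ms:", timeMs(groupWithMap, entries));
```

Both functions produce the same groups in the same order, so the two versions can be checked against each other on the same input before comparing timings. Absolute numbers will vary by machine and runtime; the useful signal is how each version's time changes as the input grows.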

Why it mattered

The improvement was not only a faster function. It removed a company-wide bottleneck from healthcare workflows where large cases are normal, reduced server response time, and made the shared parser safer for future growth.